Channelnomics Says AI in Partner Management Speeds Deal Velocity by up to 40%. The Companies Hitting It Spent 2026 Reconciling Data, Not Buying Platforms.

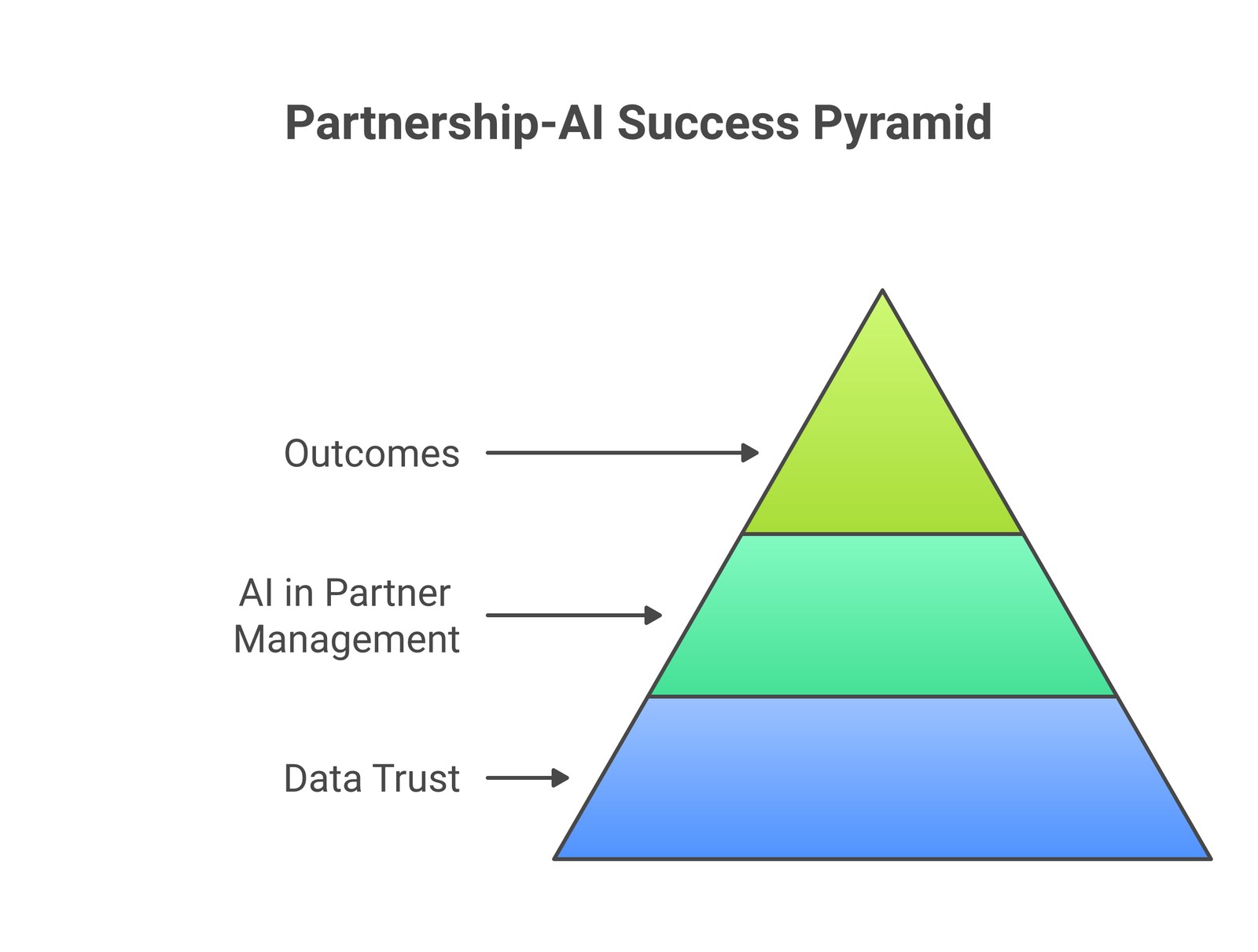

Short answer: Channelnomics reports that early adopters of AI in partner management see up to 40% faster deal velocity. However, that outcome is conditional on one prerequisite the citations leave out: data trust. Specifically, partner-attribution data, deal-registration data, partner-activity data, and joint-Slack data have to be reconciled before AI on top of them produces signal rather than noise. Therefore, the companies hitting 40% spent 2026 reconciling data, not buying platforms.

There’s a stat from Channelnomics that has been quoted in every partnership-AI conversation this year: early adopters of AI in partner management see up to 40% faster deal velocity.

The number is real. The methodology is credible. Every partnership-AI vendor on the planet has it on their homepage right now.

What every citation of this stat leaves out is the prerequisite that determines whether your company gets to be one of the early adopters who hits 40%, or one of the early adopters who buys the platform and produces a year of expensive dashboards.

The prerequisite is data trust.

The 40% goes to companies that built the reconciliation layer first

Bob Moore’s 2×2 Matrix of AI Data correctly identifies the moat: second-party data, the partner-shared, partner-consented data that competitors cannot reproduce. Every partnership platform vendor with an AI roadmap is building toward this conclusion. Crossbeam Lists, PartnerTap Co-Sell Engine, York Group’s Partner Forensics™, and the Bridge Partners + SAP Power of 3 reference architecture all assume the second-party data layer is the foundation.

The assumption is correct. The problem is that the foundation, in most companies, is sitting on a sub-foundation that is dirty enough to collapse the AI use case before it produces a single revenue dollar.

That sub-foundation is the partner-attribution, deal-registration, and partner-activity data that lives in your own CRM, your own PRM, your own deal-reg system, your own Slack channels, and the calendars of every rep who has ever touched a partner-involved deal. In most companies, every one of those sources is dirty in a partnership-specific way that the AI conversation isn’t accounting for.

Why partner data is dirtier than sales data

Sales data is dirty. Everyone in revenue ops already knows this. But sales data is dirty in a bounded way: within one company, with one CRM as the system of record, with one comp plan rewarding accuracy, with one ops team owning reconciliation.

Partner data is dirty in a federated way. The same deal exists in your CRM, your partner’s CRM, your deal-registration system, your partner’s deal-registration system, the joint Slack channel, two different sales engagement platforms, and probably a shared Google Doc somebody started at the kickoff and nobody has updated since. Every one of those sources has a slightly different version of what’s actually true. None of them is canonical.

There are four specific sources of dirt that any partnership AI investment will collide with within the first 90 days of deployment.

Source 1: Attribution conflicts between vendor and partner. Your CRM says the partner influenced the deal. The partner’s CRM says they sourced it. Your AE says she did the work. The partner manager says it was a co-sell. The deal closed; both sides agreed not to fight about it. Now the AI model is being trained on this data and cannot tell whether this was sourced, influenced, or co-sold. The model produces a confident wrong classification. The next quarter, leadership leans on the model’s output to make a strategic-resource-allocation decision. The decision is wrong because the input was wrong.

Source 2: Deal-registration logs written for political reasons. The deal-reg log is supposed to be the canonical truth on which partner gets credit for which deal. In practice, it is written by humans who are gaming a comp plan, managing a relationship, protecting a forecast, or hedging against a future audit. The result is a log full of registrations that are technically accurate, contextually misleading, and analytically toxic.

Source 3: Partner-activity tracking fragmented across five systems. The actual signal of a healthy partner motion lives in a shared Slack channel, a recurring weekly call on the calendar, threaded email between two AEs, a joint customer meeting captured (or not) in Gong or Fathom, and a mutual action plan that may or may not exist in a shared document. None of these systems talk to each other natively.

Source 4: Overlap data feeds that include stale account names and bad email matches. The second-party data feed is built on account-name matching and email-domain matching across two CRMs that both have years of accumulated dirt. “Acme Corp” in your CRM matches “Acme Corporation Inc.” in the partner’s CRM with maybe 70% confidence. The AI says they match. The rep gets a notification. The rep follows up. The rep gets burned. The rep stops trusting the notifications.
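The overlap-matching failure in Source 4 can be sketched in a few lines. This is a minimal illustration, not any vendor's matching algorithm: it uses Python's standard-library difflib for string similarity, and the suffix list, the function names, and both thresholds are assumptions chosen for the example. The idea it shows is that a mid-confidence match gets routed to a person instead of straight into a rep's notification feed.

```python
from difflib import SequenceMatcher

# Hypothetical list of legal suffixes to ignore; extend it for your own data.
LEGAL_SUFFIXES = {"inc", "corp", "corporation", "llc", "ltd", "co"}


def normalize(name: str) -> str:
    """Lowercase, drop punctuation, and strip legal suffixes."""
    tokens = name.lower().replace(".", "").replace(",", "").split()
    return " ".join(t for t in tokens if t not in LEGAL_SUFFIXES)


def match_confidence(a: str, b: str) -> float:
    """Similarity between two account names, from 0.0 to 1.0."""
    na, nb = normalize(a), normalize(b)
    if not na or not nb:
        # A name made only of suffixes carries no signal; never auto-match it.
        return 0.0
    return SequenceMatcher(None, na, nb).ratio()


def route_match(a: str, b: str,
                auto_threshold: float = 0.95,
                review_threshold: float = 0.70) -> str:
    """Decide what happens to a candidate overlap (thresholds are assumed)."""
    score = match_confidence(a, b)
    if score >= auto_threshold:
        return "notify_rep"
    if score >= review_threshold:
        return "human_review"
    return "discard"
```

Under these assumptions, "Acme Corp" and "Acme Corporation Inc." normalize to the same string and are safe to surface, while a pair like "Acme Robotics" and "Acme Robotic Systems" lands in the review queue. Only the first kind should ever reach a rep unreviewed.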

Why “more AI” doesn’t fix dirty data

Every AI vendor in the partnership space is competing on output quality: better matching algorithms, smarter scoring, predictive next-best-action recommendations, and conversational interfaces over the data. The competition is happening at the output layer because the input layer is unsexy, unbillable, and slow.

But the AI output is bottlenecked by the AI input. Information is no longer a differentiator; you can go to ChatGPT for that. What you can’t go to ChatGPT for is reconciliation of conflicting attribution data across two companies’ CRMs, four years of accumulated deal-reg dirt, and five fragmented partner-activity streams. That work has to be done by humans, with judgment, before the AI sits on top of it.

The AI vendors will not do this work for you, because it is not their work. They are selling models. The reconciliation has to happen inside your data stack, with your owners, on your timeline.

Bridge Partners’ BridgeIQ launch put the principle in one line: automation delivers scale; orchestration delivers impact. The same logic applies one layer down. Reconciliation delivers trust. Trust is the precondition for AI to produce anything other than confident noise.

What data trust actually requires

Data trust requires three commitments, in order, before any partnership AI investment will produce ROI.

Commitment 1: Pick one canonical attribution model and enforce it. Vendor-sourced versus partner-sourced versus co-sold is a real distinction with real comp-plan consequences. Pick the model. Write the rules. Train the team. Enforce the rules in the deal-registration workflow. Audit quarterly. Resolve disputes through a defined process, not through the manager who shouts loudest at the end of the quarter. This is comp-plan rewriting work. It is political. It is slow. It is the prerequisite to AI producing any meaningful attribution signal.

Commitment 2: One canonical deal-registration log with field-level validation. When the rep submits a registration, the system checks that the partner exists in your master data, the account exists in your CRM, the contact has a verified email, the deal stage matches your pipeline definition, and the partner-influence type is one of the canonical values. If any of those fails, the registration is rejected at submission. Not after the quarter closes.
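As a rough sketch of what field-level validation at submission could look like, the example below runs the five checks named in Commitment 2. Every name in it (the Registration fields, the canonical influence values, the validate function) is hypothetical, and a real deal-reg system would enforce these rules in its own workflow engine rather than in standalone Python.

```python
from dataclasses import dataclass

# Assumed canonical values, mirroring the attribution model from Commitment 1.
CANONICAL_INFLUENCE_TYPES = {"vendor_sourced", "partner_sourced", "co_sold"}


@dataclass
class Registration:
    partner_id: str
    account_id: str
    contact_email_verified: bool
    deal_stage: str
    influence_type: str


def validate(reg: Registration,
             known_partners: set,
             known_accounts: set,
             pipeline_stages: set) -> list:
    """Return every failed check; an empty list means the registration passes."""
    errors = []
    if reg.partner_id not in known_partners:
        errors.append("partner not found in master data")
    if reg.account_id not in known_accounts:
        errors.append("account not found in CRM")
    if not reg.contact_email_verified:
        errors.append("contact email not verified")
    if reg.deal_stage not in pipeline_stages:
        errors.append("deal stage not in pipeline definition")
    if reg.influence_type not in CANONICAL_INFLUENCE_TYPES:
        errors.append("influence type is not a canonical value")
    return errors


def submit(reg: Registration, known_partners: set,
           known_accounts: set, pipeline_stages: set) -> bool:
    """Reject at submission time if any check fails."""
    return not validate(reg, known_partners, known_accounts, pipeline_stages)
```

Returning the full list of failures, rather than stopping at the first one, lets the rep fix the registration in a single pass instead of resubmitting once per error.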

Commitment 3: One canonical partner-activity stream with explicit source-of-truth designation. Joint customer meetings: source-of-truth is the call recording platform. Inter-company communications: source-of-truth is the joint Slack channel. Mutual action plans: source-of-truth is the shared document with version control. Email is signal but never source-of-truth. Pick the source per activity type. Wire it into the data pipeline. Stop pulling from secondary sources. Within ninety days, the dashboards converge.
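One way to make the source-of-truth designation explicit is a small lookup table that the pipeline consults before accepting an event. The sketch below assumes a hypothetical event shape (a dict with activity_type and source keys) and hypothetical source labels; the mapping itself follows the three designations named in Commitment 3.

```python
# Activity type -> designated source of truth (labels are assumed).
SOURCE_OF_TRUTH = {
    "joint_customer_meeting": "call_recording",
    "inter_company_communication": "joint_slack",
    "mutual_action_plan": "shared_document",
}


def canonical_events(events: list) -> list:
    """Keep only events that arrive from their designated source of truth.

    Events from secondary sources (email, for instance) are dropped from the
    canonical stream; they can still be stored elsewhere as signal.
    """
    return [
        e for e in events
        if SOURCE_OF_TRUTH.get(e["activity_type"]) == e["source"]
    ]


events = [
    {"activity_type": "joint_customer_meeting", "source": "call_recording"},
    {"activity_type": "joint_customer_meeting", "source": "email"},
    {"activity_type": "mutual_action_plan", "source": "shared_document"},
]
kept = canonical_events(events)  # keeps the first and third events
```

Keeping the table in one place means a change of designation is a one-line edit, not a hunt through every dashboard query.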

These three commitments are unsexy. None of them is buyable. All of them are the precondition for the Channelnomics 40% number, and none of them is what the AI vendor demo emphasizes.

What this means for your team this month

Diagnostic one, the trust audit

Pull twenty random partner-influenced deals from the last two quarters. For each, reconcile the attribution between your CRM, the deal-reg log, and the partner’s claimed involvement. How many of the twenty produce three identical answers across the three sources? In most partnership programs we observe, the answer is fewer than five. That number is the data-trust ceiling. AI built on top of it cannot exceed it.
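The trust audit is simple enough to run from a spreadsheet export. A minimal sketch, assuming each sampled deal has been hand-labeled with the attribution recorded in each of the three sources (the field names crm, deal_reg, and partner_claim are made up for the example):

```python
def trust_ceiling(deals: list) -> tuple:
    """Count deals where all three sources give the same attribution.

    Returns (number of agreeing deals, share of the sample that agrees).
    """
    if not deals:
        return 0, 0.0
    agreeing = sum(
        1 for d in deals
        if d["crm"] == d["deal_reg"] == d["partner_claim"]
    )
    return agreeing, agreeing / len(deals)


# Illustrative sample only, not real audit data.
sample = [
    {"crm": "co_sold", "deal_reg": "co_sold", "partner_claim": "co_sold"},
    {"crm": "vendor_sourced", "deal_reg": "co_sold", "partner_claim": "partner_sourced"},
    {"crm": "partner_sourced", "deal_reg": "partner_sourced", "partner_claim": "co_sold"},
    {"crm": "co_sold", "deal_reg": "co_sold", "partner_claim": "co_sold"},
]
agreeing, share = trust_ceiling(sample)  # 2 of 4 deals agree, share is 0.5
```

On a real sample of twenty deals, the first number is the one to report: it is the count of deals a model could learn from without inheriting a disagreement.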

Diagnostic two, the source-of-truth audit

Pick five partner-activity questions you’d want a partnership AI to answer. Which partners had joint customer meetings in the last 30 days? Which partner-influenced deals are stalled? Which partner reps have we lost engagement with this quarter? Which mutual action plans are overdue on a milestone? Which overlap-flagged accounts have we actually contacted? For each question, identify the canonical source-of-truth in your stack today. If you can’t identify a single source-of-truth, that question cannot be answered reliably by AI until you fix the underlying source.

Score yourself out of five. The number is your AI readiness.

The Forecastable layer

The 9-accelerator system across Strategy, Story, and Selling treats data reconciliation as a Strategy-axis prerequisite rather than a downstream workflow problem. Ecosystem leverage, one of the three Strategy accelerators, is named for exactly this layer. Ecosystem leverage means the data and systems underneath the partnerships are centralized, reconciled, and actionable instead of fragmented, contradictory, and noise-producing.

Ecosystem leverage is the accelerator that the Co-Sell Alignment Specialist role exists to operate against, a specialist function (typically offshore for unit-economics reasons) that owns the daily reconciliation work. Without the role, no platform investment will produce the Channelnomics number, because there is nobody whose job it is to keep the data clean enough for the platform to read. The Forward-Deployed Engineer role is the second piece, the technical owner who wires the source-of-truth designations into the data pipeline.

These two roles, operating against ecosystem leverage as the Strategy-axis foundation, are how the partnership-AI promise actually closes. The platform purchase is the easy 20% of the work. The roles and the data discipline are the hard 80%.

Closing

If you have made a partnership AI investment in the last twelve months and the platform is producing more dashboards than deals, the AI is not the problem. The data underneath the AI is the problem. And the data underneath the AI is fixable, but not by the AI vendor.

The Channelnomics 40% number is real. The companies hitting it spent 2026 reconciling sources, designating source-of-truth, installing the Co-Sell Alignment Specialist role, and earning the right to point AI at clean data. The companies that skipped that work and went straight to the platform purchase are still in the dashboard phase, wondering why the AI investment isn’t producing pipeline.

That is the actual conversation worth having with your CDO and your CRO this quarter, before another year of platform investment produces another year of platform-investment disappointment.

Talk to our team about installing the data-trust layer underneath your partnership AI →

Related reading

- The second-party data architecture that AI sits on top of

- Buyers should ask for orchestration outcomes, not automation features

Sources

Forecastable is an independent third-party professional services company. Our evaluations of other vendors are based on publicly-available information as of May 2026 and our own client experience.
